
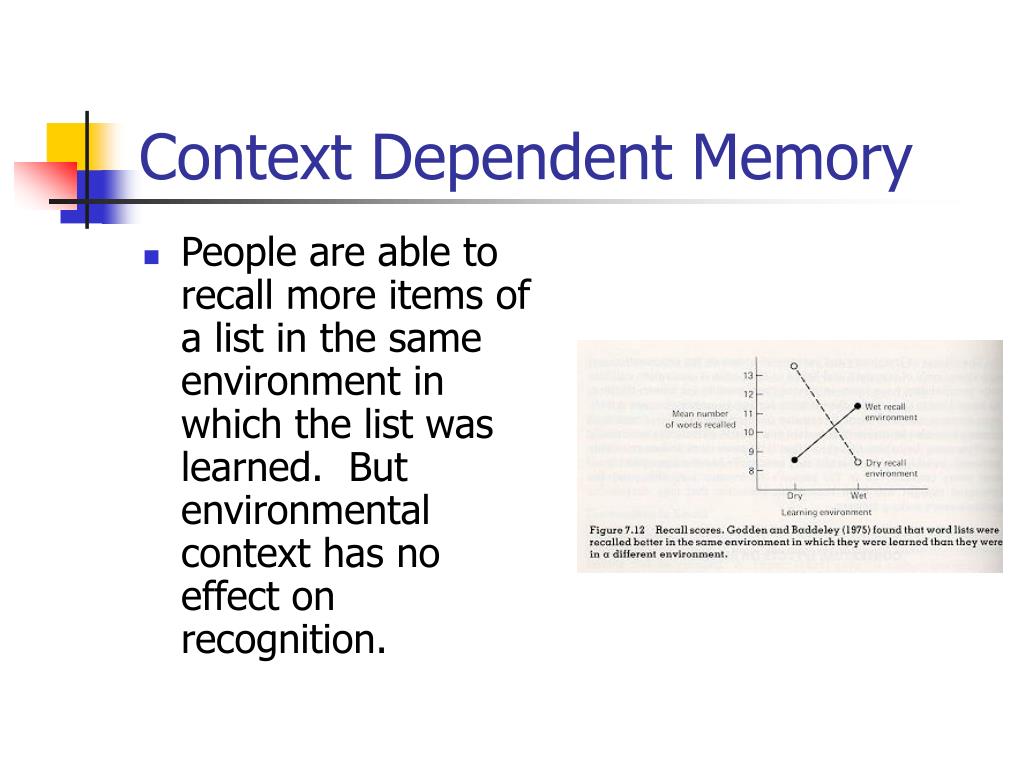

12/30/2023

Context dependent memory

When learning new information, contextual information about the encoding situation is stored in addition to the focal memory content. Later, these strings of extra information can help retrieve the learned content, as demonstrated by experiments where contextual cues from an encoding situation facilitate remembering and improve memory performance when reinstated during retrieval. This context-dependent memory effect has been investigated over the course of several decades and has been demonstrated with many different types of contexts. Based on this, the widely held belief is that context-dependent memory is a strong and robust effect, with transferable substance for everyday learning and potential clinical applications. Here we report the results of a multi-study design investigating the influence of reinstated visual contexts on memory performance. Data from 120 participants were included in three studies comprising a variety of visual cues. We show convincingly that even rich, salient and fully surrounding visual contexts provided by virtual reality are not sufficient to induce effects of context-dependency in a free recall memory task. We also investigated contextual modulation of oscillatory brain activity in order to test the effect of reinstated neural contexts, which failed to evoke a robust effect when re-tested in an internal conceptual replication study.

Context-dependent memory (CDM) is the effect whereby information is retrieved more accurately in the presence of the contextual information that was present during encoding than in the absence of that contextual information. Most previous CDM experiments have focused on spatial location, but contexts such as sights, smells, and sounds have also been shown to be effective mnemonic cues, although the research is more limited. In relation to auditory contexts, much of the previous research has focused on music and on adults. We were interested in determining whether auditory CDM effects could be found in a classroom setting in school-aged children using background noises. Across two experiments we found that the reinstatement of the auditory context improved memory performance for 2nd, 3rd, and 5th grade students. Sounds, not just musical pieces, are stored in memory and can be effective contextual mnemonic cues. Further, (auditory) CDM effects can be found in young children. Teachers should be aware of the influence of contextual auditory cues in the classroom setting, and how this information is stored along with the focus of the teaching lesson.

Three experiments, involving a total of 198 undergraduates, investigated whether the incidental environmental context on the computer screen influences paired-associate learning. Experiment 1 compared the learning of foreign and native language words between a constant context condition, where the stimulus and response pairs were presented twice on the same 5-s video background context, and a varied context condition, where the pairs were presented twice on different video contexts. Repetition in the same context resulted in better learning than in different contexts, as evaluated with a paper-and-pencil test. Experiment 2 investigated learning of paired-associate foreign and native words in the same video contexts, or photograph contexts, or on a neutral gray background. Both the video and the photograph contexts equally facilitated the paired-associate learning compared to the gray background. Experiment 3 investigated whether the incidental environmental context similarly facilitated face-name paired-associate learning. We added a new condition of spot illustrations, and a second test one day later. The repetition of face-name pairs within the same complex incidental environmental context on the computer screen (either video or photograph background) facilitated the paired-associate learning. There was no significant difference in learning performance between video and photograph background contexts, both of which were significantly better than the gray or spot illustration backgrounds, which did not differ from each other. The retention interval did not interact with the effect of the background. The present results show that repetition within the same video or photograph context, covering the entire background of the video screen on which each item pair was superimposed, facilitates paired-associate learning.


